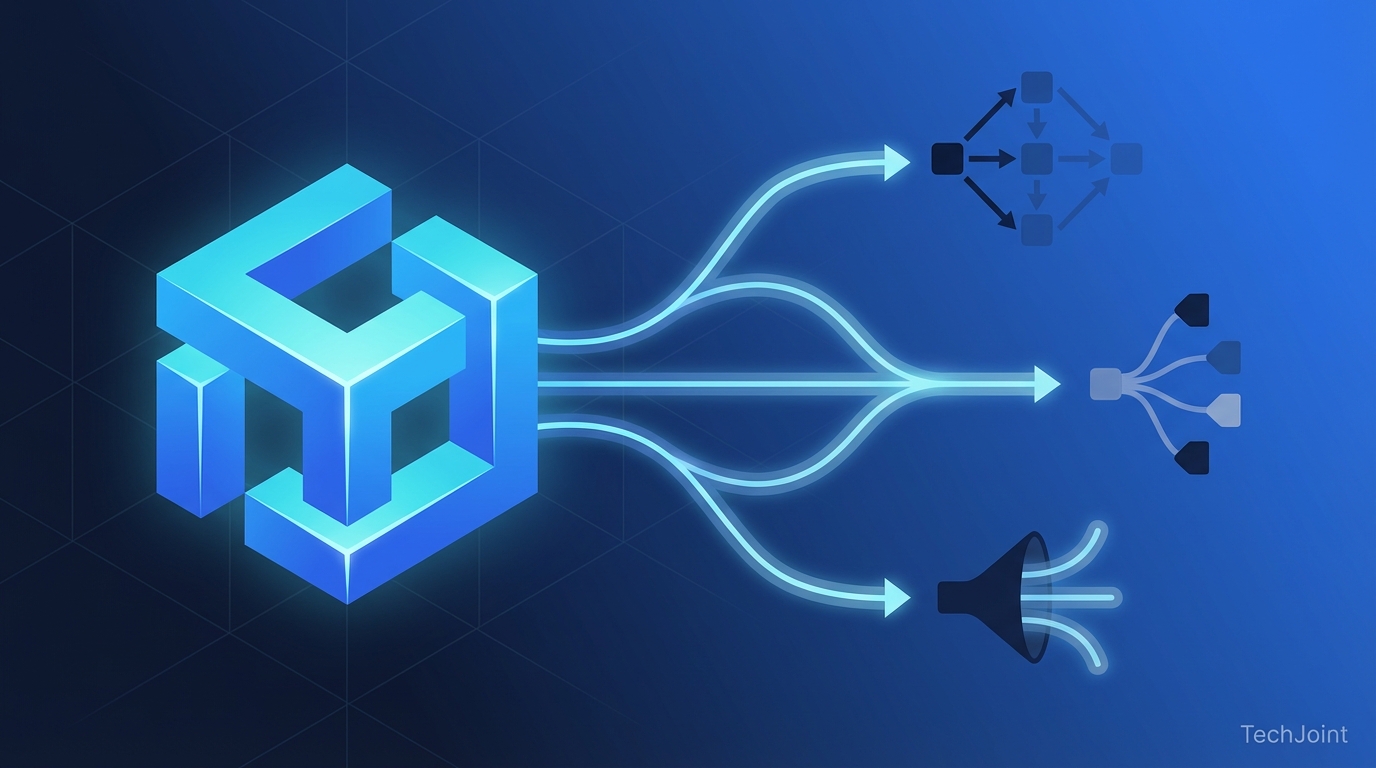

Multi-agent prompt orchestration enables durable orchestration design

Anthropic Claude exemplifies orchestrator DAG execution plans

As a CTO, should I treat the orchestrator as a durable discipline rather than using Zapier or n8n?

A central orchestrator analyzes each prompt and produces a Directed Acyclic Graph (DAG) execution plan: a map of subtasks with no cycles that records which steps depend on which and which can run in parallel. The original article describes the orchestrator as a coordinating program or model that classifies and routes work, and it names Anthropic's Claude and the Claude Code skill as practical implementations, without giving a publication year for the Anthropic source. Its source list also references Burnwise, Mirascope, Computer Weekly, and Deloitte to draw an analogy between technical layers and human hierarchies, mapping individual contributors and directors in an organization chart to technical stages. The Computer Weekly and Deloitte citations include no report titles or publication years.

The article contrasts orchestration as a discipline with workflow tools such as n8n and Zapier, positioning it as a durable practice rather than disposable middleware. It provides no latency statistic for the orchestrator, and none is invented here, so engineers should measure latency empirically for their own models and skills. Systems engineers should therefore prioritize reliable plan generation, explicit routing contracts, and testable classification passes in the orchestrator implementation, which the original piece presents as the durable design value.
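To make the DAG idea concrete, here is a minimal sketch that groups a plan into batches of subtasks that can run in parallel. The subtask names and the `parallel_batches` helper are hypothetical illustrations, not taken from the original article or from any Anthropic tooling; the only library used is Python's standard `graphlib` module.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each subtask maps to the set of subtasks it depends on.
plan = {
    "classify": set(),
    "retrieve": {"classify"},
    "critique": {"retrieve"},
    "domain_review": {"retrieve"},
    "synthesize": {"critique", "domain_review"},
}


def parallel_batches(plan):
    """Group subtasks into ordered batches whose members can run in parallel."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan contains a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose dependencies are done
        batches.append(ready)
        sorter.done(*ready)
    return batches


batches = parallel_batches(plan)
for number, batch in enumerate(batches, start=1):
    print(number, batch)
```

In this sketch `critique` and `domain_review` land in the same batch because both depend only on `retrieve`, which is the kind of parallelism a DAG plan is meant to expose.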

What should engineers prioritize when implementing an orchestrator like Claude Code?

Engineers should prioritize reliable plan generation, explicit routing contracts, and testable classification passes. The original piece presents these, rather than any particular tool, as the durable design value of the orchestrator. The plan in question is a Directed Acyclic Graph (DAG) that records subtask dependencies and which steps can run in parallel. The article names Anthropic's Claude and the Claude Code skill as practical implementations but provides no latency statistic for them, so latency should be measured empirically for each model and skill.
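One way to keep a classification pass testable is to pair it with a table of expected labels. The sketch below assumes a simple keyword classifier; the rules, labels, and test cases are hypothetical, and a real orchestrator might call a model instead of matching keywords.

```python
# Hypothetical keyword rules; the labels echo the domain specialists
# (legal, financial) that the article lists, the keywords are invented.
RULES = [
    ("legal", ("contract", "liability", "clause")),
    ("financial", ("revenue", "budget", "forecast")),
]


def classify(prompt):
    """Return the first label whose keywords appear in the prompt, else 'general'."""
    text = prompt.lower()
    for label, keywords in RULES:
        if any(word in text for word in keywords):
            return label
    return "general"


# Table-driven cases: (prompt, expected label).
CASES = [
    ("Review the liability clause", "legal"),
    ("Update the Q3 budget forecast", "financial"),
    ("Summarize this meeting", "general"),
]

# Collect every case where the classifier disagrees with the expectation.
failures = [(p, classify(p), want) for p, want in CASES if classify(p) != want]
print("failures:", failures)
```

Keeping the cases in data means the same table can be rerun unchanged if the keyword rules are later swapped for a model-based classifier.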

Mixture-of-Experts informs two-dimensional perspective routing

How should architects treat capacity metrics versus perspective labels when designing routing tables?

Two-dimensional routing assigns tasks by both model capacity and analytical perspective. Perspective routing, meaning routing by analytical orientation, sends tasks to critic, synthesis, or judge agents instead of routing on raw model capability alone. Mixture-of-Experts (MoE) is an architecture in which a gating network routes each input to a subset of expert sub-networks instead of executing the full model on every token, and the original article links this idea to agent-level routing.

The article cites arXiv entries, a Maarten Grootendorst newsletter (newsletter.maartengrootendorst), and a Frontiers file (public-pages-files-2025.frontiersin) when discussing perspective routing, but the source list gives no formal paper titles for them. Burnwise, Mirascope, and Anthropic also appear in its routing examples, again without formal bibliographic metadata. It offers no cost comparison between one-dimensional capacity routing and two-dimensional perspective routing, and none is invented here.

Designers should therefore implement explicit role contracts for critic, synthesis, and judge agents and treat capacity metrics and perspective labels as orthogonal axes in routing tables. The two-axis pattern is an orchestration-layer analogue to internal MoE gating, not a claim about specific model latency or cost, unless a cited study supplies those figures.
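A routing table with two orthogonal axes can be sketched as a dictionary keyed by a (capacity, perspective) pair. The capacity tiers and agent names below are assumptions made for illustration; the article does not define them.

```python
from enum import Enum


class Capacity(Enum):
    SMALL = "small"
    LARGE = "large"


class Perspective(Enum):
    CRITIC = "critic"
    SYNTHESIS = "synthesis"
    JUDGE = "judge"


# Hypothetical agent names, keyed by both axes independently.
ROUTING_TABLE = {
    (Capacity.SMALL, Perspective.CRITIC): "critic-lite",
    (Capacity.LARGE, Perspective.CRITIC): "critic-deep",
    (Capacity.SMALL, Perspective.SYNTHESIS): "synth-lite",
    (Capacity.LARGE, Perspective.SYNTHESIS): "synth-deep",
    (Capacity.LARGE, Perspective.JUDGE): "judge-deep",
}


def route(capacity, perspective):
    """Look up the agent registered for this capacity/perspective pair."""
    try:
        return ROUTING_TABLE[(capacity, perspective)]
    except KeyError:
        raise LookupError(
            f"no agent registered for {capacity.value}/{perspective.value}"
        ) from None


print(route(Capacity.LARGE, Perspective.JUDGE))
```

Because the key is a pair, adding a capacity tier or a new perspective only adds table entries; neither axis has to know about the other.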

How do I implement perspective routing to send tasks to critic, synthesis, or judge agents?

Implement perspective routing by giving the critic, synthesis, and judge agents explicit role contracts and by routing on analytical orientation as well as model capacity. In the routing table, treat capacity metrics and perspective labels as orthogonal axes, so that a task is assigned by both the capacity it needs and the perspective it calls for. The original article presents this as an orchestration-layer analogue to Mixture-of-Experts (MoE) gating, and it supplies no latency or cost figures for either one-dimensional or two-dimensional routing.
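An explicit role contract can be as small as a frozen record that names the perspective, the instruction, and the fields the agent must return. The sketch below is an assumption about what such a contract might hold; the instruction wording and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleContract:
    perspective: str
    instruction: str
    output_fields: tuple


# Hypothetical contracts for the three perspectives named in the text.
CONTRACTS = {
    "critic": RoleContract(
        "critic", "List weaknesses and unsupported claims.", ("issues",)
    ),
    "synthesis": RoleContract(
        "synthesis", "Merge the inputs into one answer.", ("answer", "sources")
    ),
    "judge": RoleContract(
        "judge", "Score the answer against the rubric.", ("verdict", "unmet_criteria")
    ),
}


def build_task(perspective, brief):
    """Combine the shared brief with the role contract for one perspective."""
    contract = CONTRACTS.get(perspective)
    if contract is None:
        raise ValueError(f"unknown perspective: {perspective}")
    return {
        "agent_role": contract.perspective,
        "prompt": f"{brief}\n\nRole instruction: {contract.instruction}",
        "required_fields": list(contract.output_fields),
    }


task = build_task("judge", "Review the draft answer.")
print(task["required_fields"])
```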

Expert fan-out uses purpose-built agents as in Hugging Face examples

What governance should teams set for judge roles and iterative fan-out in expert councils?

Expert fan-out dispatches tasks, concurrently or sequentially, to purpose-built expert agents. An expert agent is a purpose-built model or skill assigned a specific role; it receives a global context brief and a role-specific instruction and produces a constrained output. The original article enumerates a standard council: a retrieval agent, a critic agent, a synthesis agent, a domain specialist (legal, financial, or technical), and a judge or evaluator. It cites Edison and Black and SWFTE (an acronym the article uses without expansion) on fan-out patterns, and Hugging Face on the LLM-as-a-Judge pattern, in which a large language model acts as the evaluator. Burnwise and Mirascope also appear in its examples of agent roles. The source list gives no scoring-algorithm details or method-level scoring formulas for judge models.

The piece stresses that expert agents work without mutual awareness during execution, like blind peer reviewers, which is why the global context brief and strict output formats are needed to avoid disjointed outputs. Implementers should specify output schemas, citation and provenance requirements, and format constraints for retrieval, synthesis, critic, and domain-specialist agents so that aggregation can reconcile divergent outputs. Teams should therefore treat the judge role as a rubric-driven evaluator that can trigger iterative fan-out when criteria are unmet, implementing the retrospective engine pattern described in the cited material.
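The judge-triggered iteration can be sketched as a bounded loop: fan out, let the judge list unmet criteria, and feed those back into the next round. Everything below is a hypothetical stand-in, since the article supplies no scoring formula; the stub agents are plain functions and the rubric has a single invented criterion.

```python
def retrieval_agent(brief, feedback):
    # Stub: only adds a citation once the judge has asked for one.
    cites = ["doc-1"] if "needs_citation" in feedback else []
    return {"summary": f"facts for: {brief}", "citations": cites}


def critic_agent(brief, feedback):
    return {"summary": f"risks in: {brief}", "citations": ["doc-2"]}


def rubric_judge(outputs):
    """Return the list of unmet criteria; an empty list means accept."""
    unmet = []
    if any(not out["citations"] for out in outputs.values()):
        unmet.append("needs_citation")
    return unmet


def run_council(brief, experts, judge, max_rounds=3):
    """Fan out to every expert, then iterate while the judge reports unmet criteria."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")
    feedback = []
    for round_no in range(1, max_rounds + 1):
        # Experts never see each other's output, only the brief and judge feedback.
        outputs = {name: agent(brief, feedback) for name, agent in experts.items()}
        unmet = judge(outputs)
        if not unmet:
            break
        feedback = unmet
    return {"rounds": round_no, "outputs": outputs, "unmet": unmet}


result = run_council(
    "vendor contract renewal",
    {"retrieval": retrieval_agent, "critic": critic_agent},
    rubric_judge,
)
print(result["rounds"], result["unmet"])
```

The `max_rounds` cap is a design choice of this sketch: without it, a rubric that can never be satisfied would loop forever. The experts run one after another here for simplicity; a concurrent version would dispatch them in parallel before calling the judge.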

What output schemas and constraints must be specified for retrieval and synthesis agents?

Implementers should specify an output schema, citation and provenance requirements, and format constraints for each retrieval, synthesis, critic, and domain-specialist agent. Each agent receives the global context brief plus a role-specific instruction and works without awareness of the other agents, much like a blind peer reviewer, so strict output formats are what allow aggregation to reconcile divergent outputs. The original article cites Hugging Face for the LLM-as-a-Judge pattern but gives no method-level scoring formulas for judge models, so teams should treat the judge as a rubric-driven evaluator that can trigger another round of fan-out when criteria are unmet.
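A schema check for agent outputs might look like the sketch below. The field names and the rule that `sources` must be non-empty are assumptions chosen to illustrate a provenance requirement; the article does not prescribe a schema.

```python
import json

# Hypothetical schemas: required field name -> expected Python type.
SCHEMAS = {
    "retrieval": {"passages": list, "sources": list},
    "synthesis": {"answer": str, "sources": list, "confidence": float},
}


def validate_output(role, raw_json):
    """Return (parsed, errors); errors is empty when the payload fits the schema."""
    try:
        data = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value must be an object"]
    errors = []
    for field, expected in SCHEMAS[role].items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not isinstance(data[field], expected):
            errors.append(f"{field} must be {expected.__name__}")
    # Provenance requirement (assumed): at least one source must be cited.
    if isinstance(data.get("sources"), list) and not data["sources"]:
        errors.append("sources must not be empty")
    return data, errors


good = '{"answer": "Renew for one year.", "sources": ["doc-1"], "confidence": 0.8}'
bad = '{"answer": "Renew for one year.", "sources": []}'
print(validate_output("synthesis", good)[1])
print(validate_output("synthesis", bad)[1])
```

Rejecting malformed output at this boundary means the aggregation step only ever sees payloads with a known shape.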

JSON shared state graphs in SuprMind examples enable context flow

How must designers decide what agents can read and write in a JSON shared state graph?

Context management moves information between agents using sequential chaining, parallel fan-out, and shared state graphs. Sequential chaining injects one agent's output into the next agent's prompt as context, while parallel fan-out runs independent agents from the same global brief and waits for aggregation. A shared state graph is a centralized structured payload that agents read from and write to; it is commonly implemented as a JSON (JavaScript Object Notation) document that preserves structured memory and provenance.

The original article cites SuprMind for parallel fan-out, SWFTE for sequential patterns, and Mirascope for shared state graph examples, but the source list gives no full publication metadata for these entries. Under the JSON shared state model, the article explains, retrieval agents and synthesis agents iteratively update the central payload to enable multi-round refinement and memory across agent interactions. It specifies no token limits or maximum state sizes for the shared state graph, and none are invented here.

Designers must therefore decide what each agent can read and write, how to compress or shard JSON state for token-limited models, and how SuprMind-style parallel patterns interact with sequential chains. Deliberate choices about context flow (what each agent sees, in what form, and when) determine whether aggregation produces coherent synthesized outputs.
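Read and write rules for a shared state can be enforced in a small wrapper around the JSON payload. The sketch below is one possible design under stated assumptions: the `SharedState` class, the permission table, and the key names are all hypothetical and not drawn from Mirascope or any other cited tool.

```python
import copy
import json


class SharedState:
    """JSON-serializable state with per-agent read and write permissions."""

    def __init__(self, initial, permissions):
        self._state = copy.deepcopy(initial)
        self._permissions = permissions  # agent -> {"read": set, "write": set}
        self.log = []  # provenance: which agent wrote which key, in order

    def view(self, agent):
        """Return a copy of only the keys this agent is allowed to read."""
        allowed = self._permissions[agent]["read"]
        return {k: copy.deepcopy(v) for k, v in self._state.items() if k in allowed}

    def write(self, agent, key, value):
        if key not in self._permissions[agent]["write"]:
            raise PermissionError(f"{agent} may not write {key!r}")
        self._state[key] = copy.deepcopy(value)
        self.log.append({"agent": agent, "key": key})

    def to_json(self):
        return json.dumps(self._state, sort_keys=True)


# Hypothetical permission table for two of the council roles.
PERMISSIONS = {
    "retrieval": {"read": {"brief"}, "write": {"evidence"}},
    "synthesis": {"read": {"brief", "evidence"}, "write": {"draft"}},
}

state = SharedState(
    {"brief": "Summarize the renewal terms", "evidence": [], "draft": ""},
    PERMISSIONS,
)
state.write("retrieval", "evidence", ["clause 4.2 sets a 30-day notice period"])
synthesis_view = state.view("synthesis")
print(sorted(synthesis_view))
```

Returning copies from `view` keeps an agent from mutating state it was only allowed to read, and the `log` list gives a simple provenance trail.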

How do I combine SuprMind-style parallel fan-out with sequential chaining using JSON state?

The original article does not prescribe a single way to combine the two patterns; it leaves the interaction between SuprMind-style parallel fan-out and sequential chains as a design decision. One arrangement consistent with its definitions is to run independent agents in parallel from the same global brief, wait for their outputs, write them into the JSON shared state, and then pass that state sequentially to the next agent as context. In the original piece, retrieval and synthesis agents iteratively update this central payload, which gives the system multi-round refinement and memory across agent interactions. The article specifies no token limits or maximum state sizes, so designers must also decide what each agent may read and write and how to compress or shard the JSON state for token-limited models.
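As a rough illustration of mixing the two patterns, the sketch below runs two stub agents in parallel with `asyncio.gather`, merges their results into a JSON-compatible state, and then chains a synthesis step on the merged state. The agent functions and state keys are hypothetical; real agents would make model calls where the stubs return fixed strings.

```python
import asyncio
import json


async def retrieval(state):
    return {"evidence": [f"fact about {state['brief']}"]}


async def critic(state):
    return {"risks": [f"risk in {state['brief']}"]}


async def synthesis(state):
    # Sequential step: depends on what the parallel stage wrote.
    return {"draft": f"{len(state['evidence'])} facts, {len(state['risks'])} risks"}


async def run_pipeline(brief):
    state = {"brief": brief}
    frozen = json.dumps(state)  # same global brief for every parallel agent
    # Parallel fan-out: each agent gets its own copy of the snapshot.
    patches = await asyncio.gather(
        *(agent(json.loads(frozen)) for agent in (retrieval, critic))
    )
    for patch in patches:
        state.update(patch)
    # Sequential chaining: synthesis reads the merged state.
    state.update(await synthesis(state))
    return json.dumps(state, sort_keys=True)


final_state = json.loads(asyncio.run(run_pipeline("contract renewal")))
print(final_state["draft"])
```

Giving each parallel agent a fresh copy of the snapshot means no agent can observe another's writes mid-round, which matches the "without mutual awareness" property of fan-out.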

Gartner 2027 forecast stresses aggregation and judge feedback loops

Where should leaders invest when deploying aggregation layers and judge feedback loops?

The aggregation layer synthesizes agent outputs into a final answer and enables judge feedback loops. An aggregation instruction is the contract-like prompt that tells the synthesizer how to weigh disagreements, resolve conflicts, and format the final output, and it must be crafted precisely. In multi-round systems, a judge model evaluates the aggregated output and can route unmet criteria back through the council for another iteration, implementing the retrospective engine pattern referenced in sources such as Hugging Face and Edison and Black.

The original article cites arXiv for aggregation best practices and names a Gartner 2027 forecast claiming that seventy percent of multi-agent systems will use narrow specialized agents; the Gartner entry in the source list lacks a report title. IBM and Deloitte are named to describe the shift from persona-based prompts to contract-style interface specifications, but their source entries include no report titles or years. The piece contrasts durable platforms such as HubSpot and GoHighLevel with depreciating middleware like Zapier and n8n to illustrate what persists and what does not. It presents the TechJoint and Humans Amplified consulting narratives as strategic adoption windows for consulting firms, without pricing models or case-study metadata in the source list.

Leaders and architects should therefore invest in evaluation rubrics, explicit aggregation instructions, and orchestration director roles as the durable discipline that outlasts the specific tools and models named in the original article.

What should an aggregation instruction include to enable judge-triggered iterations?

An aggregation instruction is the contract-like prompt that tells the synthesizer how to weigh disagreements, resolve conflicts, and format the final output, so it should state each of those three things precisely. In multi-round systems, a judge model evaluates the aggregated output and can route unmet criteria back through the council for another iteration, which the original article calls the retrospective engine pattern and associates with sources such as Hugging Face and Edison and Black. Leaders and architects should therefore invest in evaluation rubrics and explicit aggregation instructions, which the article treats as more durable than any specific tool or model.
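One hedged way to picture an aggregation instruction is as a small record that renders into a prompt and carries the rubric the judge will apply. The fields, weights, and wording below are assumptions for illustration only; the article does not specify a format.

```python
from dataclasses import dataclass, field


@dataclass
class AggregationInstruction:
    weighting: dict       # role -> relative weight (hypothetical values)
    conflict_rule: str    # how to resolve disagreements
    output_format: str    # required shape of the final answer
    rubric: list = field(default_factory=list)  # criteria the judge checks

    def render(self):
        """Render the contract as the prompt text given to the synthesizer."""
        weights = ", ".join(
            f"{role}={w}" for role, w in sorted(self.weighting.items())
        )
        criteria = "\n".join(f"- {c}" for c in self.rubric)
        return (
            f"Weigh agent outputs as: {weights}.\n"
            f"When agents disagree: {self.conflict_rule}\n"
            f"Format: {self.output_format}\n"
            f"The judge will check:\n{criteria}"
        )


def next_step(verdict):
    """verdict maps each rubric criterion to True (met) or False (unmet)."""
    unmet = [criterion for criterion, ok in verdict.items() if not ok]
    return ("iterate", unmet) if unmet else ("finalize", [])


instruction = AggregationInstruction(
    weighting={"retrieval": 0.5, "critic": 0.3, "domain": 0.2},
    conflict_rule="prefer claims backed by a cited source.",
    output_format="three bullet points followed by a source list.",
    rubric=["cites sources", "states confidence"],
)
print(instruction.render())
print(next_step({"cites sources": True, "states confidence": False}))
```

Keeping the rubric inside the instruction means the synthesizer and the judge work from the same criteria, so an "iterate" decision can name exactly which criteria to send back through the council.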